To continue the ongoing plan to conquer the world via our autonomous robot, several enhancements were made to the robotic platform and a new path finding algorithm was tested.

Hardware Changes

After deploying the robot around the office, it became clear that the Bluetooth wireless module used for communication did not provide enough range or coverage to give the robot full mobility around DMC’s office. With this in mind, a new wireless communication method was sought. After researching several possibilities, DMC decided on a Wi-Fi enabled USB hub.

Wireless USB Hub

The IO Gear Wireless 4-Port USB Sharing Station is a USB hub that attaches to your local Wireless-G network and allows any computer on the network to connect to one of the USB devices on the hub as if it were directly connected to the computer.

By using this device, the host computer is now able to send commands to the iRobot Create through the standard USB-to-serial adapter from anywhere in the office that has Wi-Fi coverage. Likewise, the robot is now able to venture anywhere in the office without losing the connection to its host.
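As a rough illustration of what "sending commands" over that serial link involves, the sketch below packs a Drive command in the byte layout used by the iRobot Create Open Interface (opcode 137, a signed 16-bit velocity in mm/s, then a 16-bit radius). The function and constant names are our own for illustration, and this is not necessarily how DMC's host software builds its commands.

```python
import struct

DRIVE_OPCODE = 137              # Open Interface "Drive" command
STRAIGHT = 0x8000               # special radius value: drive straight
SPIN_CLOCKWISE = 0xFFFF         # special radius value: turn in place clockwise
SPIN_COUNTERCLOCKWISE = 0x0001  # special radius value: turn in place counter-clockwise


def drive_command(velocity_mm_s, radius):
    """Pack a Drive command: opcode, signed 16-bit velocity, 16-bit radius."""
    if not -500 <= velocity_mm_s <= 500:
        raise ValueError("velocity must be within -500..500 mm/s")
    # Big-endian: one unsigned byte, one signed short, one unsigned short.
    return struct.pack(">BhH", DRIVE_OPCODE, velocity_mm_s, radius & 0xFFFF)
```

The resulting five bytes would then be written to whatever serial port the USB-to-serial adapter appears as on the host.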

Additionally, since the hub supports up to 4 USB devices, it leaves room to expand the platform with additional hardware (did I hear someone say USB Nerf launcher?).

Power Brick

In order to incorporate the hub onto the iRobot Create, it was necessary to acquire a 12 V regulated power source. Since the Create only provides unregulated battery voltage (up to 14.5 V) and low-current 5 V outputs, a power block had to be assembled to run the USB station. It was also decided to incorporate a 5 V power supply for the wireless camera into the same brick.

A 5 V, 3 A regulator was purchased from Pololu along with a 12 V regulator and project box from Battery Space. Once assembled, the power brick provides outputs to both the USB hub and camera as well as a removable power connection to the Create.

(The power brick has an input plug for unregulated battery voltage and two output cables, one at 5 V and one at 12 V.)

Automated Programs

Along with the hardware changes, a new automated path recognition program was tested on the robot. This is in addition to the basic line-following algorithm originally implemented. The two provide separate ways for the robot to navigate around the office, as detailed below.

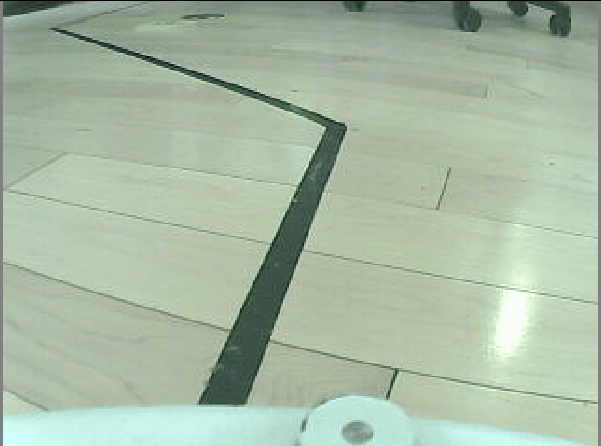

Line Following

The first automated program implemented on DMC’s robot was a simple line-following algorithm. It applies several image-processing steps in succession to isolate a dark line in the robot's view and then commands the robot to follow it.

Grayscale

This algorithm simply converts the color image to grayscale in order to more easily filter the image and isolate the dark line.
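A minimal sketch of this step in plain Python is shown below. The luminance weights (0.299, 0.587, 0.114) are a common convention and an assumption here; the post does not say which weighting the robot's grayscale module uses.

```python
def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) tuples into rows of 0-255 intensities."""
    return [
        # Weighted sum of the channels; green contributes most to brightness.
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in rgb_image
    ]
```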

Adaptive Threshold

After converting the image to grayscale, the adaptive threshold module converts all the pixels to either black or white depending on their contrast with respect to their neighboring pixels. This helps isolate the black line from the rest of the image even if there are shadows or uneven lighting conditions.
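To make the idea concrete, here is a small sketch of one common form of adaptive thresholding: each pixel is compared with the mean of its surrounding window rather than with one global cutoff. The `radius` and `offset` parameters are illustrative assumptions, not values from the robot's configuration.

```python
def adaptive_threshold(gray, radius=1, offset=10):
    """Return a mask where 1 marks pixels darker than their local mean."""
    height, width = len(gray), len(gray[0])
    mask = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Collect the neighborhood, clipped at the image border.
            window = [
                gray[ny][nx]
                for ny in range(max(0, y - radius), min(height, y + radius + 1))
                for nx in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            local_mean = sum(window) / len(window)
            # "offset" keeps flat regions from flickering between 0 and 1.
            if gray[y][x] < local_mean - offset:
                mask[y][x] = 1
    return mask
```

Because the comparison is local, a line on a brightly lit floor (background 200, line 100) and the same line in shadow (background 100, line 40) produce the same mask, whereas a single global threshold of 150 would mark the entire shadowed image as black.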

Erode

Once the high-contrast parts of the image are isolated, an erode function is applied, which shrinks the black regions of the image. This removes noise (small, isolated high-contrast specks) and breaks thin connections between large sections of black.
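Erosion can be sketched as follows, assuming a binary mask in which 1 marks a black pixel and a 3x3 neighborhood (the post does not state the kernel size actually used). A pixel survives only if it and all eight of its neighbors are set, and border pixels are simply cleared in this simplified version.

```python
def erode(mask):
    """Keep a pixel only if it and all 8 neighbors are set (3x3 erosion)."""
    height, width = len(mask), len(mask[0])
    out = [[0] * width for _ in range(height)]
    # Border pixels lack a full neighborhood, so they stay cleared.
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            if all(mask[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)):
                out[y][x] = 1
    return out
```

An isolated speck disappears entirely, while a 3x3 block shrinks to its single center pixel.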

Blob Filter

Once we have obtained our isolated blobs, we filter them to find the one corresponding to our line. This filtering removes blobs with a size below 1000 and favors blobs that are close to the horizontal center and to the bottom of the image.
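The sketch below shows one way such a filter could work. It assumes that "size" means area in pixels and uses a made-up scoring rule (distance from the horizontal center plus distance from the bottom row); the actual weighting used on the robot is not described in this post.

```python
from collections import deque


def find_blobs(mask):
    """Group set pixels into 4-connected blobs, each a list of (x, y)."""
    height, width = len(mask), len(mask[0])
    seen = [[False] * width for _ in range(height)]
    blobs = []
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue, blob = deque([(x, y)]), []
            while queue:  # breadth-first flood fill from the seed pixel
                cx, cy = queue.popleft()
                blob.append((cx, cy))
                for nx, ny in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
                    if (0 <= nx < width and 0 <= ny < height
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            blobs.append(blob)
    return blobs


def pick_line_blob(mask, min_area=1000):
    """Return the best-scoring blob of at least min_area pixels, or None."""
    height, width = len(mask), len(mask[0])

    def score(blob):  # hypothetical scoring rule; lower is better
        mean_x = sum(x for x, _ in blob) / len(blob)
        mean_y = sum(y for _, y in blob) / len(blob)
        off_center = abs(mean_x - (width - 1) / 2) / width  # 0 when centered
        off_bottom = (height - 1 - mean_y) / height         # 0 at the bottom row
        return off_center + off_bottom

    candidates = [b for b in find_blobs(mask) if len(b) >= min_area]
    return min(candidates, key=score) if candidates else None
```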

Once we have obtained our line through the above algorithms, we can control our robot based on the location of the line in our camera image. If the center of the line is to the left or right, we turn the robot toward it and keep moving forward. If we can no longer see a line, we turn the robot in a circle until it finds the line again. The resulting behavior is that the robot follows a line until it reaches the end, then turns around 180 degrees and starts following the line back.
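That control rule can be summarized in a few lines. The command names and the `dead_band` tolerance below are placeholders of our own, not the robot's actual command set.

```python
def steering_command(line_center_x, image_width, dead_band=0.1):
    """Map the line's horizontal position to a hypothetical drive command."""
    if line_center_x is None:
        return "spin"  # no line in view: rotate in place until one appears
    # Normalized offset: -1.0 at the left edge, +1.0 at the right edge.
    offset = (line_center_x - image_width / 2) / (image_width / 2)
    if offset < -dead_band:
        return "left"
    if offset > dead_band:
        return "right"
    return "forward"
```

The dead band keeps the robot from twitching left and right when the line is already close to the center of the image.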

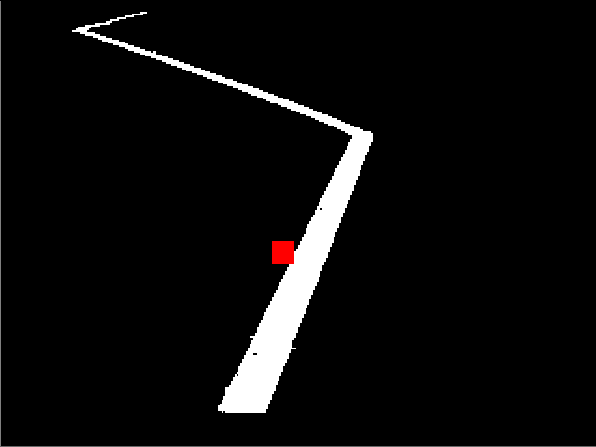

(The red dot in the image on the right marks the weighted center of the line, which is the robot's target.)

Obstacle Detection

In addition to line following, an attempt was made at obstacle detection and automated path detection. The idea was to apply image algorithms to identify obstacles in the robot's path and command the robot to travel toward the farthest location with no obstacles in the way. The obstacle detection combined several algorithms to identify obstacles, then applied a mask to the image that blocked any paths behind the identified obstacles. The farthest unblocked location that remained became the point for the robot to approach.
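One simple way to picture the masking step is sketched below. It assumes a forward-facing camera, so that rows nearer the top of the image are farther from the robot, and a binary obstacle map in which 1 marks an obstacle pixel. This is an illustration of the idea only, not the algorithm combination that was tested on the robot.

```python
def farthest_free_point(obstacles):
    """Return (x, y) of the farthest reachable pixel, or None if blocked."""
    height, width = len(obstacles), len(obstacles[0])
    best = None
    for x in range(width):
        y = height - 1
        if obstacles[y][x]:
            continue  # this column is blocked right in front of the robot
        # Walk up from the bottom row and stop at the first obstacle, so
        # everything behind it in this column is treated as masked out.
        while y > 0 and not obstacles[y - 1][x]:
            y -= 1
        if best is None or y < best[1]:
            best = (x, y)
    return best
```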

Below is an example image from the obstacle detection along with the computed end location.

This method proved to be unsuccessful in its current configuration due to the similarity between the floors and walls of the office. Further attempts at automated path finding are currently being tested.